Sentinel-2 cloudless

Eternal summer where the sun shines every day the whole year does not exist on Earth. At least not everywhere (opens new window). And that is a good thing too since plants need the occasional rainfall to prosper and we need plants for food and oxygen. When dealing with satellite imagery though, clouds cloak the Earth's surface and the shadows they throw obscure features on the ground. From certain areas, it even is close to impossible (opens new window) to get a cloud-free view (opens new window). In the end, almost 70% of the Earth's surface is covered by clouds every day (opens new window).

But somehow, when looking at the satellite layer of one of the big mapping companies like Google, Bing or Mapbox, there are no clouds and on the whole Earth there seems to be summer. How does this work?

We answer this question in the following and explain which process we applied in the generation of our Sentinel-2 cloudless map.

The key here is to get multiple images and merge them in a way that the best pixels (i.e. no cloud or cloud shadow) are selected for the target mosaic.

One approach is, to use cloud masks for each image if available and replace the masked patches with cloud-free areas from other images. If the images are manually pre-selected it can lead to pretty good results (opens new window) however it is very likely that the image and cloud mask borders can be seen within the mosaic because light or vegetation conditions vary between images depending on the date they were acquired from. Furthermore, manual pre-selection is tedious and does not scale well for larger areas.

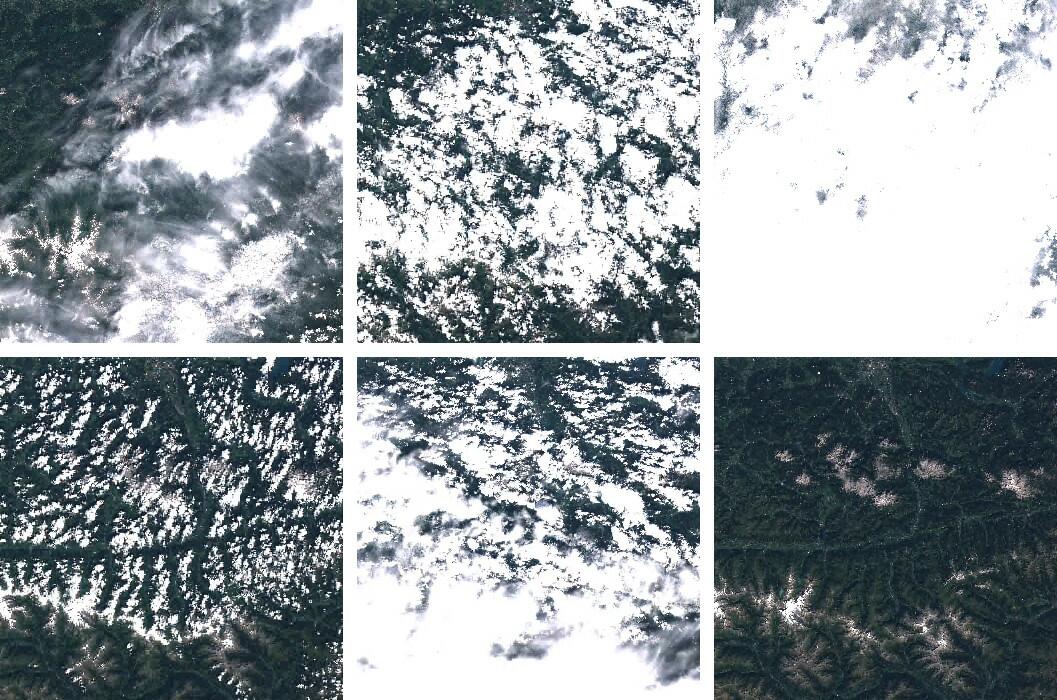

A more brutal approach is to apply the pixel sorting method used by Mapbox (opens new window) and Google (opens new window). Here, a time stack of pixels is sorted by color brightness and one or multiple pixels not showing very bright (cloud) or very dark (shadow) extremes are selected, merged and used for the target mosaic.

The latter approach has two main advantages:

- If the numbers of images within the timestack is large enough, the borders between images get smoothed out which makes the mosaic appear seamless.

- It is fully automatable.

Because we want to map the world and the results shown by Mapbox and Google looked very promising, we decided to go with the latter approach. Visit http://s2maps.eu/ (opens new window) to browse through the map.

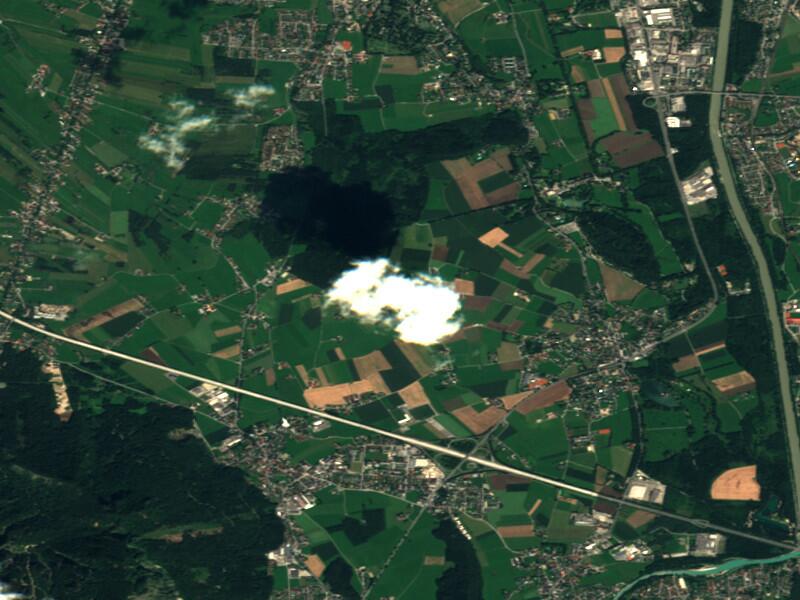

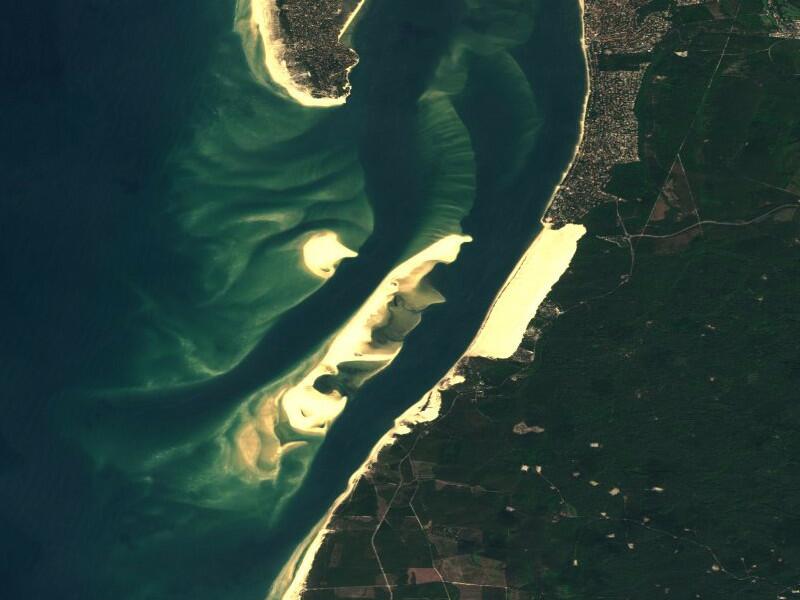

To provide a preview, this is how the result looks on selected locations:

# Data

In previous blog posts we already covered the Sentinel-2 data structure and how to get the data. To create this mosaic we decided to get the data from Sinergise hosted by AWS (opens new window) because the data access is more reliable and, more importantly, data is structured by the Sentinel tiling scheme (which is based on MGRS (opens new window)).

Unfortunately, the only product level currently available is Level-1C, which means the images are orthorectified but the image values are "top of the atmosphere" (TOA) which means that atmospheric correction has not been applied.

There is the possibility to calculate the atmospheric correction by using the semi-open-source tool SEN2COR (opens new window) but processing times per scene were too long and would have slowed down our processing chain significantly.

The main challenge here for us was to find a way do do it quicker with acceptable results. There is a great article from Planet (opens new window) describing color correction using image software and simply stretching separately the different bands to approach realistic colors. So, for this version we are using a static stretching for all of the data.

# The Tools

Processing such an amount of data requires the right set of tools. We mainly use Python as there are great packages available to handle spatial data (rasterio (opens new window)/GDAL (opens new window)) and arrays (NumPy (opens new window)). The core pixel selection algorithm, which is sort of a middle- (opens new window)out approach as described above, was implemented in Cython (opens new window) to increase performance.

Based on the experiences made during the creation of the European coverage, improvements will be made for future processing.

# Mapchete

The framework gluing it all together is developed in-house, open source and called Mapchete (opens new window). It applies a geoprocess written in Python to a large dataset by chunking the input data into smaller tiles. Look forward to a deeper introduction in a later blog post.

# Sinergise Catalog

Having access to the S3 bucket holding the Sentinel-2 archive alone is not sufficient for these processing jobs. At some point, a preselection has to be made which datasets are being used based on their geographical location and timestamp. This is where the catalog service from our friends at Sinergise come into play.

# Amazon Web Services

We used a bunch of EC2 instances to scale up the process and Celery (opens new window), a Python-based task queuing framework. This allowed us to spin up a large number of virtual machines working through the CPU intensive processing steps.

# Using The Layer

The Sentinel-2 cloudless layer is provided under a Creative Commons Attribution-ShareAlike 4.0 International License (opens new window). This means everyone is allowed and encouraged to use the layer as he/she sees fit while providing attribution that reads "Sentinel-2 cloudless (opens new window) by EOX IT Services GmbH (opens new window) (Contains modified Copernicus Sentinel data 2016)" and include the links as shown here where possible.

# WMTS Endpoint

Among other layers provided by EOX Maps (opens new window), the Sentinel-2 layer is available via WMTS and can be used in QGIS, OpenLayers or other OGC-compliant viewers: WMTS GetCapabilities (opens new window)

# Contact

Questions? Suggestions? Do you want to have your algorithm to be processed on the Sentinel-2 archive? Feel free to contact us via twitter (opens new window) or e-mail!

# Sources

All images contain modified Copernicus Sentinel data 2016.